Updated at 4:34 p.m. ET on May 2, 2026

Donald Trump is on TikTok doing his morning routine. “Get ready with me for a big day 💄🇺🇸,” reads the caption, as the president holds a makeup brush to his cheek. The scene is a still, ostensibly a screenshot of a TikTok video. Like so much other AI-generated slop coursing through the internet, the image is fake and ridiculous. It also looks unnervingly real: There are no hands with six fingers, physics-defying angles, or other flagrant signs of AI-generated imagery. At a quick glance, it really looks like the president is putting on bronzer.

Created in ChatGPT with the prompt “Trump doing a makeup tutorial on TikTok”

I made this deepfake with OpenAI’s new image-generation model. ChatGPT Images 2.0, released last week, can create photorealistic visuals that are noticeably more convincing than what its predecessors might have produced. The tool has flooded the internet with hyperreal fakes: for example, Jeffrey Epstein as a Twitch streamer. I created the “screenshot” of Trump’s fake TikTok after encountering a similar image on the ChatGPT subreddit, and I’ve since been able to use Images 2.0 to create all kinds of alarming deepfake images, including of Elon Musk getting whisked away by the FBI, world leaders suffering medical emergencies, and top American politicians donning Nazi paraphernalia (none of which I’ve shared anywhere).

This was all unsettling in its own right. But the most realistic deepfakes I was able to create didn’t involve politicians or celebrities. They mostly didn’t depict people at all. With little effort, I was able to create more than 100 fraudulent images, including prescriptions for opioids and ADHD medication, bank alerts, social-media posts, fake IDs, and passports.

A sample license from the Washington, D.C., DMV website

A fake license created by editing the sample image using ChatGPT

Images 2.0 is especially good at generating images with text in them, which may not sound impressive, but it’s a big deal. Image models have long struggled to produce pictures that contain words. Otherwise realistic-looking visuals end up pockmarked with bungled street signs and distorted billboards. This makes ChatGPT Images 2.0 a much more sophisticated graphic-design tool, but it also makes the new model fantastic for perpetrating fraud. In my experiments, OpenAI’s tool readily generated images of fake health documents (doctor’s notes, vaccination cards, and medical tests), as well as forged financial materials (invoices, receipts, and tax forms). Many of these images were highly persuasive, complete with fully legible text, shading, and other visual props that increased their photorealism.

Some images were more convincing than others. The fake medical prescriptions were legible, but the handwriting looked more like the output of an iPad stylus than a pen on paper. When I fed OpenAI’s model a boarding pass from an old flight and asked the bot to update it with new details for an upcoming flight, ChatGPT generated a new boarding pass, but surely the bar code wouldn’t have actually scanned me onto a flight. And although I really hope my ChatGPT-generated driver’s license wouldn’t fool the TSA, perhaps it would trick a hotel receptionist or an out-of-state bouncer who would accept a “photo” of my ID instead of the real card. Many of the more persuasive-looking images contained minor errors: In the pictured receipt, ChatGPT correctly summed up the total cost of items purchased but miscalculated the state tax (alongside other slight errors).

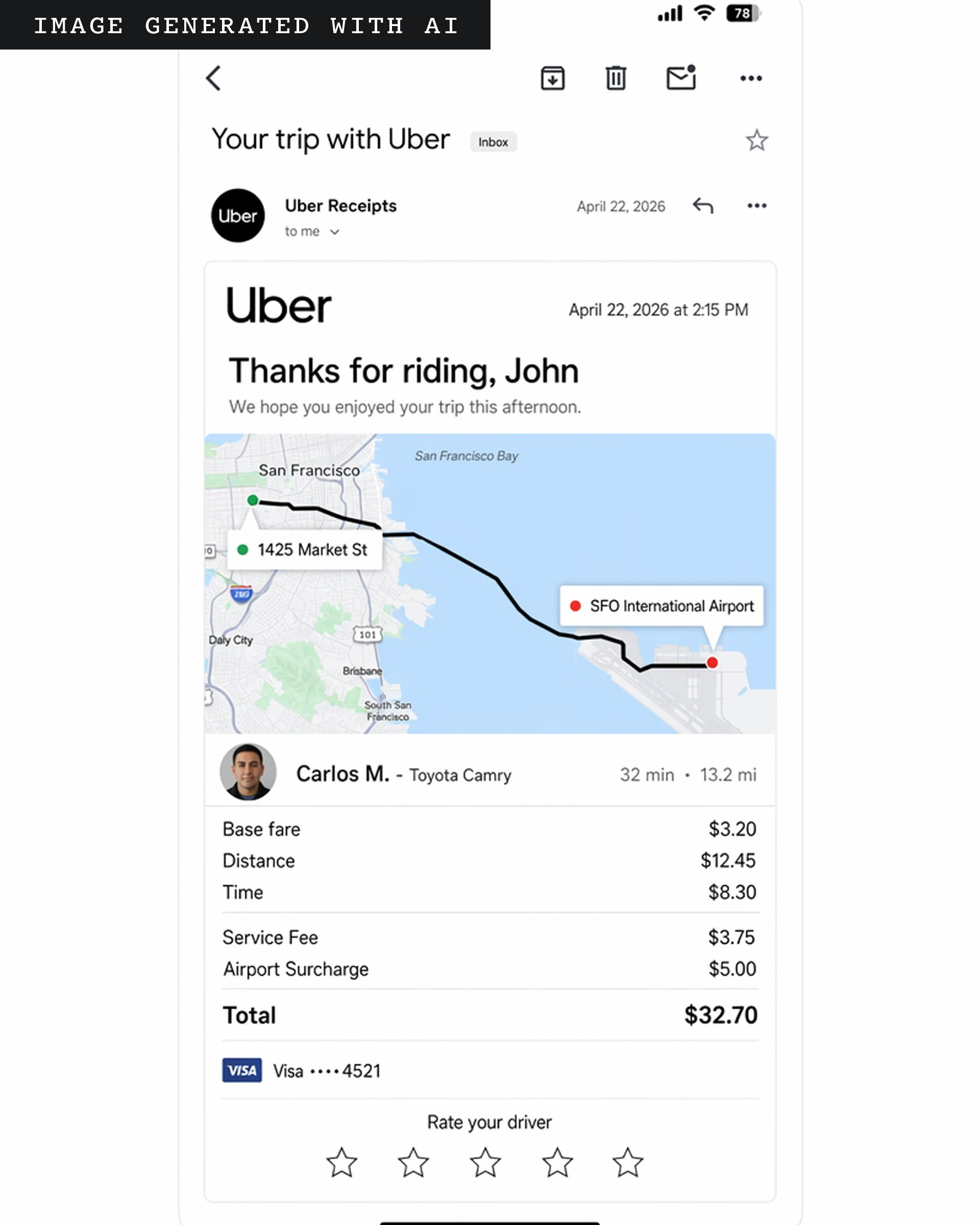

OpenAI’s tool particularly excels at creating fake screenshots. Need to fabricate confirmation of a wire transfer from Chase? A Wells Fargo alert for unusual account activity? A receipt for an Uber trip? Done, done, and done. These images could supercharge all kinds of commonplace scams. A bad actor could email their target an image of a fake Uber receipt alongside a link to report suspicious activity. The recipient, confused to see a receipt for a trip they never took, might then click the fraudster’s sketchy link, accidentally handing over sensitive information in doing so: a classic phishing scam. (Again, there are flaws: For instance, the map depicted in the Uber image is wrong in many ways; among other issues, it suggests a car trip across a body of water where there is no bridge.)

ChatGPT Images 2.0 especially excels at creating fake screenshots.

Image technologies have long aided scammers. In the 1990s, as computerized color copiers and home printers became commonplace, American banknotes were redesigned to ward off counterfeiters. For decades, people have used tools such as Photoshop to manipulate digital imagery. But faking images has never been so fast and cheap. Last month, the FBI released its annual report on internet crimes, and for the first time ever, it included a section on AI scams, which cost Americans nearly $1 billion last year. Expense-reimbursement fraud (employees faking receipts) is already on the rise. A recent OpenAI report details how one set of scammers posing as lawyers used an older image model to create a fake bar-association membership card. “The boundaries of the applications of this technology is really only limited by a fraudster’s imagination,” Mason Wilder, research director at the Association of Certified Fraud Examiners, told me. Google’s image-generation tools also let me make all kinds of fake materials. But when it comes to fraudulent documents and screenshots, at least for now, the new ChatGPT model seems to be better at the task.

In theory, I shouldn’t have been able to make most of these images in the first place. OpenAI prohibits the use of its technology for fraud or scams. When I shared several examples with OpenAI and asked why I was able to generate such a diverse array of fraudulent imagery, a company spokesperson told me that OpenAI’s goal “is to give users as much creative freedom as possible” while still enforcing “usage policies.” To guard against misuse, the new model “includes multiple layers of image-specific safety protection.” Clearly, these protections are not working very well. The spokesperson also said that images generated with ChatGPT include certain metadata. But OpenAI has previously noted that metadata can be “easily removed either accidentally or intentionally,” for example by uploading an image to social media or simply taking a screenshot.

OpenAI’s model generated fraudulent financial imagery using bank logos. Certain account information has been redacted from these images.

Google has similar restrictions against using its tools for fraud. When I sent the company images I made with its models, a spokesperson said that the tools “frequently get better” at enforcing guardrails. Google also embeds AI-generated images with an imperceptible watermark, and offers a detection tool called SynthID. In my tests, SynthID was quite effective at identifying images generated with Google’s models. But most people are not going to run every image they see through such a tool.

All of this makes it even harder for banks, hospitals, government agencies, and the like to prevent fraud. Using OpenAI’s model, I was easily able to create a fake Chase Bank check and wire-transfer alert. “We need an ecosystem-wide effort, including from AI companies, to strengthen guardrails and help stop these crimes at the source,” a Chase spokesperson told me, adding that the bank has its own safeguards in place to protect customers. But even if the top AI companies were to radically improve their own guardrails, there would still be the problem of open-source models. Fraud-prevention experts are working on technological fixes, Wilder said, but “the good guys are almost always a step behind.”

Much of the current discourse around deepfakes has focused on the extreme, such as fabricated political scandals or world events. These are very real concerns: Using Google’s and OpenAI’s image models, I was easily able to create highly persuasive screenshots of fake New York Times and Atlantic articles.

I uploaded a screenshot of a real Atlantic article I wrote and instructed the bot to replace it with this fake one.

Using ChatGPT, I manipulated a screenshot of The New York Times’ homepage, replacing a real story with this fake one about spinach. (Without prompting, the bot also swapped in an article about groceries; the rest of the stories are real.)

The images convincingly matched the visual layout and typography used by the two publications, filled in coherent text, and generated the names of actual authors. But as fragmented as our media ecosystem may be, a quick Google search is likely to reveal whether such images are fake. It’s the mundane, micro-targeted deepfakes (the ones that scam your relatives, not momentarily confuse social-media feeds) that may be more sinister.

This article originally misstated the number of fake headlines in an AI-edited screenshot of The New York Times’ homepage. The image contains two made-up stories, not one.