The fabric decision that defines margins

An enterprise CFO reviews AI spend. Training bills are spiking at a hyperscaler where the company already has contracts in place, even though its data doesn’t live only there. Inference performance is lagging, and a neocloud pilot is on the table. Only a year or two ago, practical choices were mostly limited to hyperscalers. The recent explosion of AI has made neoclouds a real option for many organizations. As these organizations mature, they learn they have alternatives when they hit limits on performance, cost, flexibility, service, or GPU availability. The question isn’t whether to use a neocloud. It’s whether that neocloud can capture the full AI lifecycle (training and production inference) or only a one-time project.

Across the market, providers with comparable GPU footprints are seeing very different outcomes. Some watch customers train models on their infrastructure, then move production workloads elsewhere. For every dollar of training revenue retained, several dollars of higher-margin inference revenue walk out the door. Others are seeing inference revenue grow faster than training, gross margins expand from the mid-teens toward the high-30s, and valuations that reflect durable platform economics rather than commodity pricing. With thousands of AI projects now underway globally, it isn’t surprising that different providers see slightly different patterns. Still, clear trends are emerging in how architectures and business models correlate.

The difference isn’t better GPUs or temporary discounts. Providers pulling ahead have made one specific architectural bet: unified AI fabrics that run training and inference simultaneously at high performance, backed by a unified control plane. This is a structural decision that compounds over time. When you choose between dual fabrics and a unified fabric, you have effectively chosen your margin profile.

The economics are stark. A dual-fabric provider running separate training and inference infrastructures carries higher capital and operational costs and has less flexibility. Its margins tend to settle in the mid-teens. A unified-fabric competitor with a similar GPU count handles both workloads on a single fabric. It captures inference SLAs alongside training jobs, shifts the business mix toward higher-margin recurring revenue, and earns higher valuation multiples in the process. In realistic scenarios, the gross profit gap between these two paths can reach hundreds of millions of dollars at scale. That gap determines who has the cash flow to keep investing, and who gets left behind in a consolidating market. Neoclouds therefore need to ask not only how their fabric is built, but also how much of their business model is tuned toward higher-margin, recurring inference versus one-off training projects.
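To make the margin arithmetic concrete, here is a minimal Python sketch that compares annual gross profit under the two margin profiles named above. The revenue figure and the exact percentages are hypothetical placeholders, not data from any provider: 15% stands in for "mid-teens" and 38% for "high-30s". Revenue is held equal for simplicity, even though a unified-fabric provider would also be capturing additional inference revenue.

```python
# Hypothetical inputs for illustration only; not measured provider data.
ANNUAL_REVENUE_MUSD = 1_500      # assumed annual revenue, millions of USD
DUAL_FABRIC_MARGIN_PCT = 15      # stand-in for "mid-teens" gross margin
UNIFIED_FABRIC_MARGIN_PCT = 38   # stand-in for "high-30s" gross margin


def gross_profit_musd(revenue_musd: float, margin_pct: float) -> float:
    """Gross profit (millions of USD) for a revenue figure and gross margin."""
    return revenue_musd * margin_pct / 100


def gross_profit_gap_musd(revenue_musd: float, low_pct: float, high_pct: float) -> float:
    """Gross profit difference between two margin profiles at equal revenue."""
    return gross_profit_musd(revenue_musd, high_pct) - gross_profit_musd(revenue_musd, low_pct)


if __name__ == "__main__":
    gap = gross_profit_gap_musd(
        ANNUAL_REVENUE_MUSD, DUAL_FABRIC_MARGIN_PCT, UNIFIED_FABRIC_MARGIN_PCT
    )
    print(f"Annual gross profit gap: ${gap:,.0f}M")
```

With these assumed inputs, the gap is revenue times the 23-point margin difference, or $345M per year. Because the relationship is linear, the gap grows directly with scale.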

Platform or GPU broker?

Through 2024 and 2025, the dominant neocloud pitch was simple: GPU access at prices below hyperscalers. That differentiation still matters for many customers, but new decision criteria are emerging. Does the neocloud own and operate the GPUs? Do customers get direct access to level-3 specialists in AI networking and GPU optimization? Can the provider troubleshoot across the full stack, and can it offer dedicated or shared GPU environments with advisory and benchmarking support before a commitment? These may sound like minor points. They become critical when a training or inference cluster stops working and the question is: who can fix it, and how fast?

For some segments, the pure price gap is narrowing as the largest neoclouds and hyperscalers converge on similar capacity. Meanwhile, many emerging neoclouds still offer significantly lower effective TCO once service, support, storage, and microservices are included. In some regions and for some large buyers, hyperscalers appear to have caught up on GPU supply. Yet many organizations with modest or even significant AI footprints still face shortages in the type, timing, and location of the capacity they need. Pricing continues to compress. Competing on “cheaper GPU rental” alone is a race to the bottom.

The providers that survive through 2030 are likely to look less like GPU resellers and more like integrated AI platforms. They will manage training, inference, fine-tuning, and iteration so customers can run AI as a business capability, not a one-off project. Platform providers command pricing power and stickiness. When a customer’s recommendation engine, fraud detection, and personalization models all run on integrated infrastructure, switching costs become prohibitive, and that customer doesn’t re-evaluate providers for every new project. The common pattern is clear: the winners behave like platforms and offer differentiated services, rather than acting as GPU resellers with no added value.

The customer lifecycle makes this concrete. A retailer trains a recommendation model on a few hundred GPUs. It now needs to serve thousands of inference requests per second, with strict latency SLAs, for its e-commerce site. A dual-fabric neocloud can’t guarantee those production SLAs alongside other tenants. The customer is steered to a hyperscaler, and the neocloud is left with a one-off training win and millions in lost lifecycle revenue. A unified-fabric neocloud deploys the same model into production on the same infrastructure. There is no second vendor, no data migration, no egress fees, and no new tooling. Twelve months later, fine-tuning and new use cases land on the same platform. Within two years, the customer has standardized on it.

Why training fabrics fail at inference

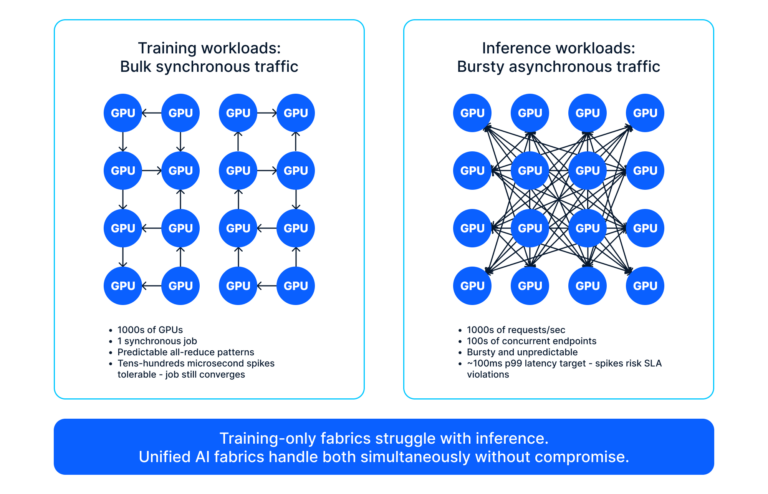

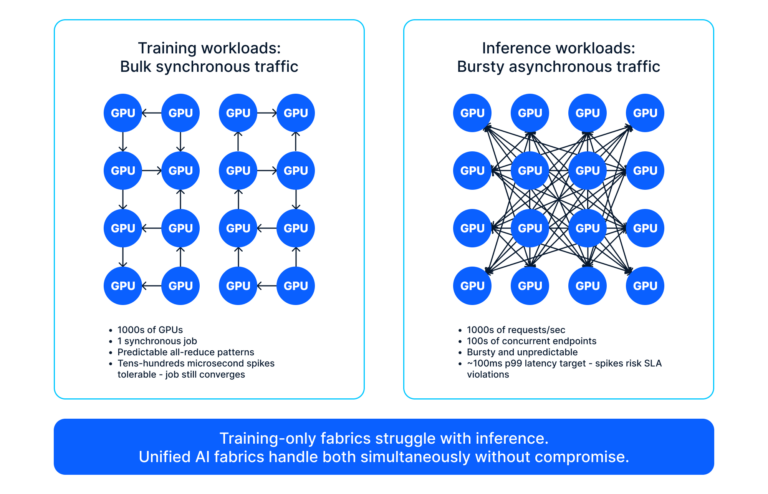

Training and inference represent fundamentally opposite traffic patterns flowing through the same physical network. Large-scale training requires synchronized gradient updates across thousands of GPUs. That traffic is bulk and predictable, with megabytes moved per synchronization step. The workload tolerates brief delays: a congestion spike that slightly extends training time is acceptable. Traditional training fabrics are optimized for exactly this, with enough buffering to absorb bursts, high bandwidth, and congestion-aware routing.
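The "megabytes per synchronization step" figure can be estimated from how gradients are exchanged. The sketch below assumes a ring all-reduce, one common algorithm used by collective communication libraries. In practice, the fabric may also see tree or hierarchical all-reduce patterns. In a ring, each GPU sends and receives about 2(N - 1)/N times the message size. As the GPU count grows, per-GPU traffic therefore approaches twice the gradient bucket size.

```python
def ring_allreduce_bytes_per_gpu(message_bytes: int, num_gpus: int) -> float:
    """Bytes each GPU sends (and, symmetrically, receives) in a ring all-reduce.

    A ring all-reduce is a reduce-scatter followed by an all-gather. Each phase
    takes N - 1 steps, and each step moves 1/N of the message, so every GPU
    transfers 2 * (N - 1) / N * message_bytes in total.
    """
    if num_gpus < 2:
        return 0.0  # nothing to exchange with a single GPU
    return 2 * (num_gpus - 1) / num_gpus * message_bytes


def ring_allreduce_time_s(message_bytes: int, num_gpus: int, link_gbps: float) -> float:
    """Bandwidth-only lower bound on ring all-reduce time, in seconds.

    Ignores per-step latency, congestion, and overlap with compute.
    """
    link_bytes_per_s = link_gbps * 1e9 / 8
    return ring_allreduce_bytes_per_gpu(message_bytes, num_gpus) / link_bytes_per_s


if __name__ == "__main__":
    bucket = 25_000_000  # assumed 25 MB gradient bucket
    per_gpu = ring_allreduce_bytes_per_gpu(bucket, 1024)
    seconds = ring_allreduce_time_s(bucket, 1024, 400)
    print(f"{per_gpu / 1e6:.2f} MB per GPU, >= {seconds * 1e3:.3f} ms at 400 Gb/s")
```

Take an assumed 25 MB bucket across 1,024 GPUs on 400 Gb/s links. Each GPU moves just under 50 MB, and the bandwidth-only bound is roughly 1 ms per bucket, before per-hop latency or congestion is added.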

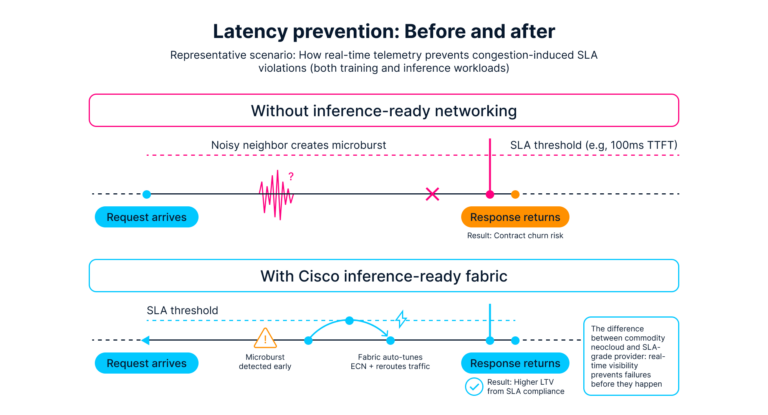

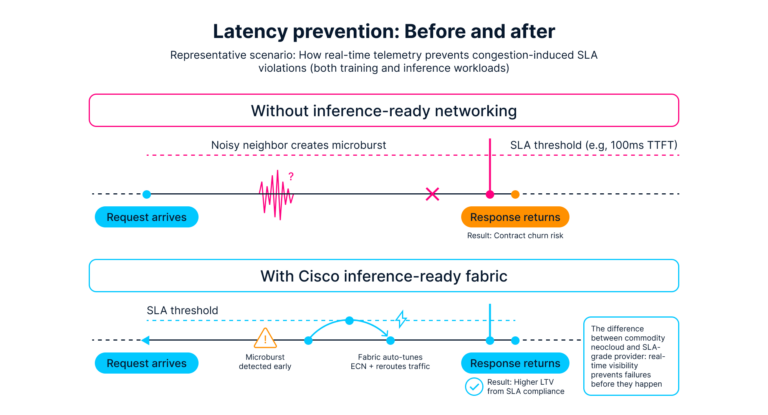

As shown in Figure 1, inference traffic is the opposite. Requests arrive asynchronously from many clients at unpredictable intervals. Each one is small (kilobytes rather than megabytes) and each one is latency-critical. When a production application expects 80 ms and receives 200 ms, SLA penalties loom. The buffering tuned for bulk training traffic can add latency to small inference requests queued behind gradient bursts. Operations teams often respond by segregating workloads onto separate racks and fabrics. The result is two infrastructures with duplicate capital and operational overhead.
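The head-of-line effect described above reduces to simple arithmetic. A message that lands in a shared FIFO queue waits for every byte already queued ahead of it, however small the message itself is. The sketch below compares that wait with a non-preemptive strict-priority queue, where a latency-critical message waits at most for the one packet already on the wire. The 16 MB backlog, 400 Gb/s link, and 4,096-byte maximum packet are assumed values for illustration. The model ignores propagation delay and the message's own transmission time.

```python
def serialization_delay_us(num_bytes: int, link_gbps: float) -> float:
    """Microseconds needed to transmit num_bytes on a link of link_gbps."""
    bits_per_us = link_gbps * 1e3  # 1 Gb/s = 1,000 bits per microsecond
    return num_bytes * 8 / bits_per_us


def fifo_wait_us(backlog_bytes: int, link_gbps: float) -> float:
    """Wait for a message arriving behind backlog_bytes in one shared FIFO queue.

    Every byte already queued ahead must drain first, so the wait depends on
    the backlog, not on how small the arriving message is.
    """
    return serialization_delay_us(backlog_bytes, link_gbps)


def strict_priority_wait_us(max_packet_bytes: int, link_gbps: float) -> float:
    """Worst-case wait for a high-priority message under non-preemptive strict
    priority, assuming its own queue is empty: only the lower-priority packet
    already being transmitted must finish."""
    return serialization_delay_us(max_packet_bytes, link_gbps)


if __name__ == "__main__":
    link = 400  # assumed link speed, Gb/s
    print(f"FIFO behind 16 MB backlog: {fifo_wait_us(16_000_000, link):.1f} us")
    print(f"Strict priority, 4 KB packet: {strict_priority_wait_us(4096, link):.3f} us")
```

Under these assumptions, an inference request behind a 16 MB gradient backlog waits 320 µs at a single hop. With strict priority, it waits about 0.08 µs behind one 4 KB packet. The FIFO penalty repeats at every congested hop the request crosses.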

Unified fabric architecture

Unified fabrics bring workload awareness into the network itself. When gradient traffic flows, the fabric recognizes it as bulk synchronous communication, routes it to paths with appropriate buffering, and lets it queue briefly. When inference requests arrive at the same time, the fabric identifies them as latency-critical and steers them onto the lowest-latency paths. This protects SLAs without starving training.
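The queue-level half of this behavior can be sketched as a two-class scheduler: classify each packet, then serve latency-critical traffic first without letting bulk traffic starve. This is a minimal illustration, not how any particular switch implements it. The `kind` tag, the `TwoClassScheduler` class, and the `max_priority_burst` starvation guard are hypothetical. Real fabrics typically classify on packet markings such as DSCP and also steer flows across paths, which this sketch does not model.

```python
from collections import deque
from dataclasses import dataclass
from typing import Optional

LATENCY_CRITICAL = "latency_critical"
BULK = "bulk"


@dataclass
class Packet:
    flow_id: str
    size_bytes: int
    kind: str  # hypothetical workload tag: "inference" or "gradient"


def classify(pkt: Packet) -> str:
    """Map a packet to a traffic class (hypothetical tag-based rule)."""
    return LATENCY_CRITICAL if pkt.kind == "inference" else BULK


class TwoClassScheduler:
    """Serve latency-critical packets first, with a starvation guard for bulk.

    After max_priority_burst consecutive latency-critical packets, one bulk
    packet is sent if any is waiting, so training traffic keeps making progress.
    """

    def __init__(self, max_priority_burst: int = 8) -> None:
        self.queues = {LATENCY_CRITICAL: deque(), BULK: deque()}
        self.max_priority_burst = max_priority_burst
        self._burst = 0  # consecutive latency-critical packets sent

    def enqueue(self, pkt: Packet) -> None:
        self.queues[classify(pkt)].append(pkt)

    def dequeue(self) -> Optional[Packet]:
        hi, lo = self.queues[LATENCY_CRITICAL], self.queues[BULK]
        if hi and (self._burst < self.max_priority_burst or not lo):
            self._burst += 1
            return hi.popleft()
        if lo:
            self._burst = 0
            return lo.popleft()
        return None  # both queues empty


def drain(sched: TwoClassScheduler) -> list:
    """Dequeue until empty and return the flow IDs in transmit order."""
    order = []
    while (pkt := sched.dequeue()) is not None:
        order.append(pkt.flow_id)
    return order


if __name__ == "__main__":
    sched = TwoClassScheduler(max_priority_burst=1)
    for i in range(2):
        sched.enqueue(Packet(f"grad-{i}", 4096, "gradient"))
    for i in range(3):
        sched.enqueue(Packet(f"infer-{i}", 512, "inference"))
    print(drain(sched))
```

Consider a queue holding two gradient packets and three inference packets. With `max_priority_burst=1`, it drains as inference, gradient, inference, gradient, inference. Raising the limit lets more inference packets through before a bulk packet is guaranteed a turn.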

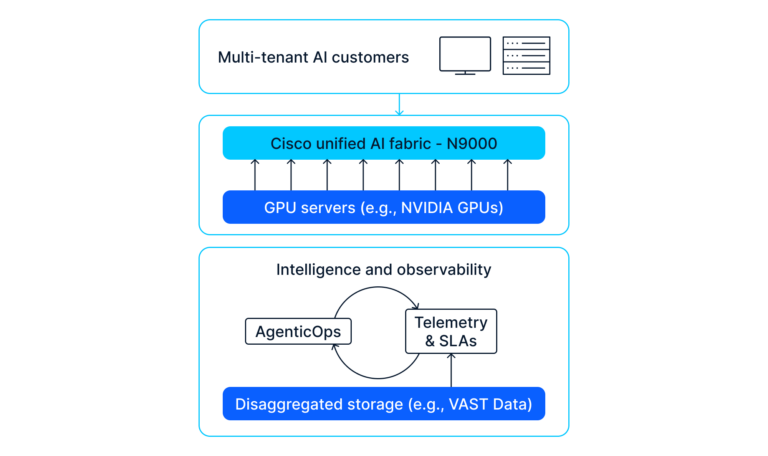

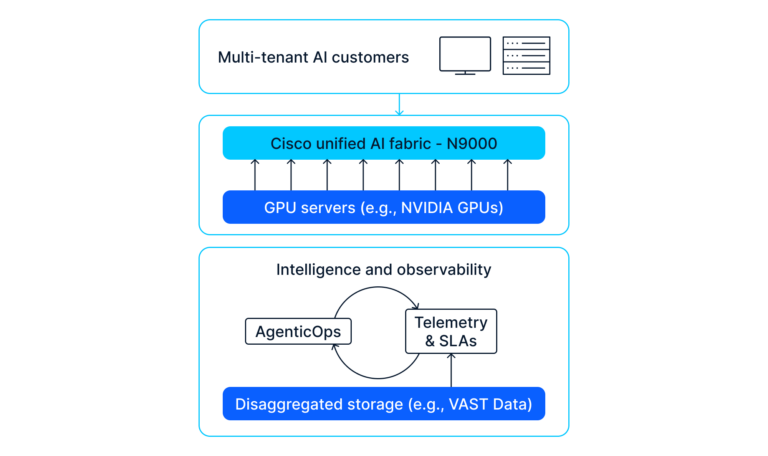

Cisco N9000 Series Switches provide silicon-level support for this model. They offer sub-5-microsecond fabric latencies for fast collective operations, RoCEv2-based lossless Ethernet with ECN and PFC for large-scale training, and deep shared buffers to absorb gradient bursts. At the same time, workload-aware congestion management and live in-band telemetry maintain latency guarantees for inference flows under heavy load.
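ECN and PFC both react to queue occupancy, but at different thresholds. The sketch below models two things: a RED-style ECN marking curve of the kind commonly paired with RoCEv2 congestion control, and PFC pause/resume hysteresis on an ingress queue. All thresholds are hypothetical, and `PfcIngressQueue` is an illustrative model rather than a switch configuration. In common deployments, ECN marking begins below the PFC XOFF threshold so that senders slow down before hop-by-hop pausing is needed.

```python
from typing import Optional


def ecn_mark_probability(queue_bytes: int, k_min: int, k_max: int, p_max: float) -> float:
    """RED-style ECN marking curve.

    No marking at or below k_min, probability rising linearly to p_max at
    k_max, and every packet marked once the queue exceeds k_max.
    """
    if queue_bytes <= k_min:
        return 0.0
    if queue_bytes > k_max:
        return 1.0
    return p_max * (queue_bytes - k_min) / (k_max - k_min)


class PfcIngressQueue:
    """PFC pause/resume with hysteresis on one ingress priority queue.

    Emits "XOFF" (pause the upstream sender for this priority) when occupancy
    reaches xoff_bytes, and "XON" (resume) once it drains to xon_bytes.
    """

    def __init__(self, xoff_bytes: int, xon_bytes: int) -> None:
        if xon_bytes >= xoff_bytes:
            raise ValueError("xon_bytes must be below xoff_bytes")
        self.xoff_bytes = xoff_bytes
        self.xon_bytes = xon_bytes
        self.paused = False

    def update(self, occupancy_bytes: int) -> Optional[str]:
        """Return "XOFF", "XON", or None if the pause state does not change."""
        if not self.paused and occupancy_bytes >= self.xoff_bytes:
            self.paused = True
            return "XOFF"
        if self.paused and occupancy_bytes <= self.xon_bytes:
            self.paused = False
            return "XON"
        return None


if __name__ == "__main__":
    # Hypothetical thresholds: ECN marking starts well before PFC would pause.
    print(ecn_mark_probability(250_000, k_min=100_000, k_max=400_000, p_max=0.2))
    pfc = PfcIngressQueue(xoff_bytes=800_000, xon_bytes=600_000)
    print([pfc.update(q) for q in (500_000, 850_000, 700_000, 550_000)])
```

The gap between XOFF and XON keeps the queue from toggling pause frames on every small fluctuation. The ECN curve gives senders a graded signal before that point.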

At the rack level, Cisco N9100 switches built on NVIDIA Spectrum-X Ethernet silicon handle GPU-to-GPU collectives while enforcing per-rack isolation for multi-tenant inference. Disaggregated storage platforms such as VAST Data serve both workloads on the same network, including training checkpoints, model repositories, and inference request data, each with appropriate prioritization.

Real-time intelligence under load

The control plane determines whether unified fabric intelligence is usable at scale. Cisco Nexus One and Cisco Nexus Dashboard provide a unified management layer that centralizes telemetry, automation, and policy enforcement. As a result, multi-tenant AI clusters operate as a single platform rather than a patchwork of domains.

Consider a stress test. A large pre-training job runs across thousands of H100-class GPUs, while inference endpoints serve production models for dozens of enterprise customers. Then one customer’s application goes viral, and inference request rates jump two orders of magnitude in under a minute.

On a training-optimized fabric, the sequence is familiar. Inference traffic piles up behind gradient bursts, P99 latency blows past SLA thresholds, timeouts cascade, and incident channels light up. Even after the training job is throttled, the damage to SLA metrics and customer trust is done.

On a unified fabric with Cisco Nexus One as the control plane, the response is automated. In-band telemetry surfaces the traffic shift, and the fabric auto-tunes its policies. Inference traffic receives priority lanes. Training traffic shifts to alternate paths with deeper buffering. Explicit congestion notifications signal training senders to reduce their rate temporarily. The training job’s all-reduce time increases only marginally, within convergence tolerance, while inference stays within its P99 SLA. No manual intervention. No SLA violation. The operations team watches everything on a single dashboard: training convergence metrics, per-tenant inference latency distributions, and the fabric’s own actions.
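On RoCEv2, the rate-reduction step driven by explicit congestion notifications is commonly handled by DCQCN. In DCQCN, the receiver returns congestion notification packets (CNPs) for ECN-marked traffic. The sender then cuts its rate multiplicatively and recovers once notifications stop. The sketch below is a simplified DCQCN-style sender under stated assumptions. It is an assumption that the fabric described here uses this algorithm, and the gain `g = 1/256` is an assumed default. The additive-increase and hyper-increase stages are omitted.

```python
class DcqcnSender:
    """Simplified DCQCN-style rate control at a RoCEv2 sender (reaction point).

    current_gbps is the sending rate (Rc), target_gbps the rate to recover
    toward (Rt), and alpha the running estimate of congestion severity.
    """

    def __init__(self, line_rate_gbps: float, g: float = 1 / 256) -> None:
        self.current_gbps = line_rate_gbps
        self.target_gbps = line_rate_gbps
        self.alpha = 1.0
        self.g = g  # assumed gain for the alpha moving average

    def on_cnp(self) -> None:
        """A congestion notification packet arrived: cut the rate multiplicatively."""
        self.target_gbps = self.current_gbps
        self.current_gbps *= 1 - self.alpha / 2
        self.alpha = (1 - self.g) * self.alpha + self.g

    def on_quiet_period(self) -> None:
        """No CNP during an update period: decay alpha and move halfway back
        toward the target rate (the fast-recovery step; the additive and
        hyper-increase stages of full DCQCN are omitted)."""
        self.alpha *= 1 - self.g
        self.current_gbps = (self.current_gbps + self.target_gbps) / 2


if __name__ == "__main__":
    sender = DcqcnSender(line_rate_gbps=400)
    sender.on_cnp()
    rates = [sender.current_gbps]
    for _ in range(2):
        sender.on_quiet_period()
        rates.append(sender.current_gbps)
    print(rates)
```

Suppose a sender starts at 400 Gb/s with alpha = 1. One CNP halves its rate to 200 Gb/s. Two quiet periods then bring it back to 300 Gb/s and 350 Gb/s. This is the kind of temporary slowdown that lets training absorb a burst while staying within convergence tolerance.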

The cost of delay

A provider running separate fabrics might tell itself that a unified fabric can wait for the next budgeting cycle. Meanwhile, a competitor deploys a unified fabric this year. Within a few quarters, that competitor starts capturing customers whom the first provider trained but couldn’t serve in production. The competitor’s margins improve, and its next funding round prices in platform economics rather than commodity pricing.

By the time the first provider decides to act, tens or hundreds of millions may already be tied up in dual fabrics. Retrofitting a unified fabric becomes a multi-year migration instead of a clean build. During that window, the most valuable customers are signing multi-year platform agreements with someone else.

The market is consolidating, and the window to lead rather than follow is narrow. Neocloud CEOs, CTOs, and infrastructure leads face a fabric decision this year. That decision will determine whether your organization becomes a differentiated AI platform or remains a GPU broker in a market that no longer rewards commodity capacity.

Unified networks: The strategic choice

Cisco works with neoclouds and innovative providers worldwide to build secure, efficient, and scalable AI platforms that deliver outcomes across the entire model lifecycle. For readers who want to go deeper, detailed AI fabric white papers, design guides, and partner reference architectures are available, with full metrics, test data, and topologies.

Additional resources: